The AI Winner is Decided by Power: Energy Geopolitics and Japan's Reversal Scenario for 2030

The AI battlefield is shifting from algorithms to physical infrastructure. By 2026, AI power consumption will rival an entire nation's, making energy the new currency for business success.

Chapter 1: The Reality of the AI Bubble is a "Scramble for Energy"

In 2026, Digital Evolution Collides with Physical Limits

The rapid proliferation of AI currently underway is not merely a competition of algorithms. It has transformed into a physical phenomenon with a profound impact on global power infrastructure.

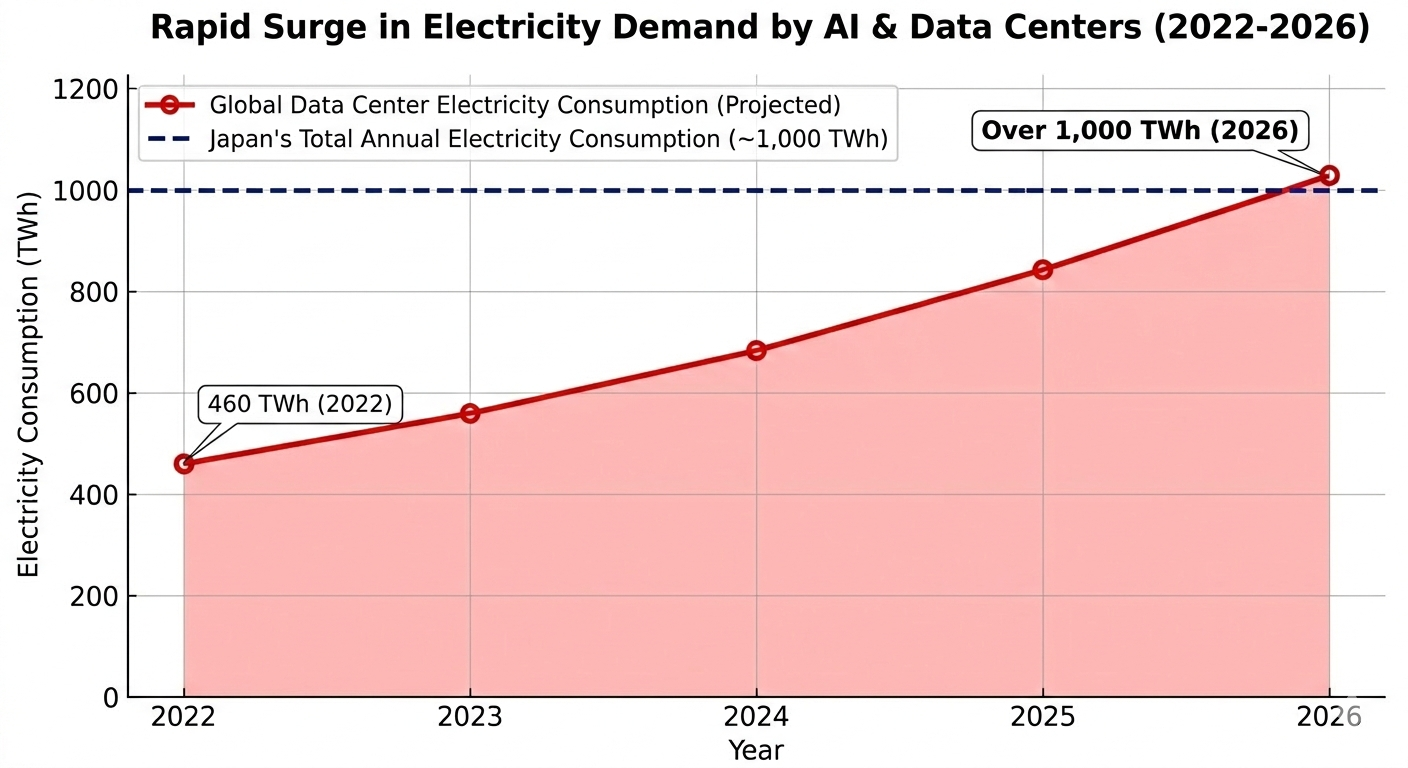

According to forecasts by the International Energy Agency (IEA), electricity consumption by data centers, AI, and the cryptocurrency sector is expected to exceed 1,000 TWh (terawatt-hours) globally by 2026. This 1,000 TWh figure is roughly equivalent to the annual electricity consumption of the entire nation of Japan. Given that consumption was approximately 460 TWh in 2022, demand is set to more than double in four years. AI has moved beyond the realm of software and is becoming a massive physical industry that requires enormous amounts of energy.

AI Power Consumption Forecast 2026: Why Data Center Power Shortages are Becoming Severe

Until now, the main battlefield for AI development has been the number of model parameters and the superiority of algorithms. However, the axis of competition is shifting toward securing real estate and power grids. No matter how superior an AI model you develop, if you cannot secure the power to run it, it will not be viable as a business.

This physical constraint has already become a serious bottleneck.

- United States: New grid connections now face wait times of four to eight years.

- Japan: In the Tokyo metropolitan area, where demand is concentrated, there are cases where companies are forced to wait up to 36 months for new data center construction.

In modern business, victory or defeat is determined less by the technical skills of engineers and more by geopolitical resource acquisition: specifically, how quickly and reliably one can secure locations with guaranteed power.

TWh (terawatt-hour): A unit representing the scale of energy. 1 TWh equals 1 billion kWh, equivalent to the annual power consumption of approximately 300,000 general households. It is predicted that by 2026, data centers, AI, and cryptocurrency combined will consume as much power as the entire nation of Japan.
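The figures quoted above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the numbers from the text (460 TWh in 2022, 1,000 TWh in 2026, roughly 300,000 households per TWh); the function names are illustrative assumptions, not part of any IEA methodology.

```python
# Back-of-the-envelope check of the consumption figures quoted in the text.
KWH_PER_TWH = 1_000_000_000  # 1 TWh = 1 billion kWh


def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate implied by two consumption figures."""
    return (end_twh / start_twh) ** (1 / years) - 1


def household_equivalents(twh: float, kwh_per_household_year: float) -> float:
    """Number of households whose annual use adds up to the given energy."""
    return twh * KWH_PER_TWH / kwh_per_household_year


# 460 TWh (2022) -> 1,000 TWh (2026), as cited from the IEA forecast.
growth = implied_annual_growth(460, 1000, 2026 - 2022)

# "About 300,000 households per TWh" implies this annual use per household.
kwh_per_household = KWH_PER_TWH / 300_000

print(f"Implied growth rate: {growth:.1%} per year")
print(f"Implied household use: {kwh_per_household:,.0f} kWh per year")
print(f"1,000 TWh in households: {household_equivalents(1000, kwh_per_household):,.0f}")
```

Under these inputs the implied growth is roughly 21% per year, sustained for four consecutive years, which is far faster than power infrastructure is usually expanded.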

Power as a Management Resource Changes Investment Criteria

Going forward, the competitiveness of an AI business will be defined not by the sheer volume of computational resources, but by the ability to secure inexpensive and stable power.

What becomes crucial here is shifting the focus of investment from quantity to quality (efficiency). While hyperscalers like GAFAM monopolize computational resources through capital power, the key to sustainable growth lies in new technologies that achieve power efficiency.

- Next-Generation Architectures: Technologies that suppress energy consumption, such as in-memory computing and neuromorphic computing, are attracting attention.

- Transformation of Investment Targets: The focus of AI-related stocks for investors and analysts is shifting from software companies to energy, infrastructure, and new material companies.

Geopolitical Risks Exposed by Supply Chain Vulnerabilities

In the sustainability of AI, the management of supply chains—including power grids and rare metals (critical minerals)—is even more important than securing semiconductor chips.

Critical minerals such as nickel, lithium, and gallium are concentrated in specific countries and are directly affected by geopolitical tensions and trade regulations. This creates a "single point of failure" risk, in which a stall at one point in the supply chain causes the entire AI infrastructure to malfunction.

For business leaders formulating medium- to long-term strategies, AI is not just a tool for operational efficiency. Decisions must start from the physical wall of energy constraints and anticipate a reorganization of industrial structures, for example by utilizing Edge AI or diversifying bases to regions with abundant power resources.

Chapter 2: The New Equation of "Quantity" and "Quality" Determining AI's True Value

Suppliable Power Defines the Limits of Intelligence

To objectively evaluate the value of an AI business, one must look directly at the physical constraints supporting it, not just the evolution of software. The value of future AI businesses will be defined by the following correlation:

AI Value = Scalable Power Supply (Quantity) × Performance per Watt (Quality)

Until now, AI evolution has been driven by the input of massive computational resources and power—that is, the expansion of quantity. However, as stated in Chapter 1, now that physical power infrastructure is reaching its limits, "Suppliable Power (Quantity)" is no longer a variable that can be increased at will, but a constant that limits the scale of business. The variable in the future AI race has completely shifted to improving quality—how to increase computational performance per watt.
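The relationship above can be made concrete with a small sketch. It treats suppliable power as a fixed cap and performance per watt as the only lever; the 50 MW allocation and the efficiency values are hypothetical numbers chosen purely for illustration, and "performance" is left in arbitrary units.

```python
# Illustrative model of: AI Value = Suppliable Power (Quantity) x Performance per Watt (Quality).
WATTS_PER_MW = 1_000_000


def ai_value(power_mw: float, perf_per_watt: float) -> float:
    """Total useful compute (arbitrary units) from a power budget and an efficiency level."""
    return power_mw * WATTS_PER_MW * perf_per_watt


def power_needed_mw(target_value: float, perf_per_watt: float) -> float:
    """Power budget required to reach a target value at a given efficiency."""
    return target_value / (WATTS_PER_MW * perf_per_watt)


capped_power_mw = 50.0  # hypothetical grid allocation that cannot be increased

baseline = ai_value(capped_power_mw, perf_per_watt=1.0)
improved = ai_value(capped_power_mw, perf_per_watt=2.0)

# What a competitor at baseline efficiency would need in order to match `improved`.
matching_power = power_needed_mw(improved, perf_per_watt=1.0)

print(f"Value gain from efficiency alone: {improved / baseline:.1f}x")
print(f"Power needed to match at baseline efficiency: {matching_power:.0f} MW")
```

When the power term is pinned, doubling performance per watt is equivalent to being granted a second grid connection of the same size, which is the core of the "quality" argument.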

Power Becomes a Scarce Resource Dictating Business Continuity

This structural change has dramatically altered the factors determining AI performance. It is no longer just the skill of engineers with algorithms; the ability to secure inexpensive and stable power faster than competitors has become an extremely critical factor in business success.

In the current business environment, the following is occurring:

- Prolonged Connection Wait Times: In the U.S., Europe, and Japan, wait times for data center power connections are measured in years.

- Risk of Market Dropout: Companies that cannot secure power will be forced to retreat from the market due to physical constraints, regardless of how excellent their AI models are.

For executives and DX (Digital Transformation) managers, AI implementation is no longer just a task for the IT department; it is a management decision that should be treated as an infrastructure strategy for energy procurement itself.

A Shift to New Indicators for AI Investment: Computational Efficiency per Watt (Quality)

Against global companies that monopolize quantity through massive capital, the key for other companies to secure an advantage lies in the pursuit of power efficiency (quality). While expanding power infrastructure takes a long time, the speed of efficiency improvement through hardware and algorithms exceeds it.

- Dramatic Reduction in Computational Costs: By combining efficiency technologies such as architectural optimization, model distillation, and the introduction of AI-specific ASICs, rather than relying solely on hardware evolution, it is theoretically possible to double computational efficiency (halve costs) in 8 to 9-month spans for specific benchmarks. However, note that this is not a steady evolutionary speed like "Moore's Law," but a specialized evolution based on the close integration of specific algorithms and hardware.

- Expectations for Next-Generation Technology: The practical application of in-memory computing, which significantly reduces energy consumption, and neuromorphic computing, which mimics the structure of the brain, is progressing.

The evaluation criteria for investors and analysts are also shifting from software feature comparisons to companies handling cooling technologies that reduce Power Usage Effectiveness (PUE) and next-generation semiconductor devices with ultra-low power consumption.
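To put the 8-to-9-month doubling figure in perspective, the sketch below compounds it over the 36-month grid-connection wait mentioned earlier for the Tokyo area. It assumes, purely for illustration, that the doubling pace holds steadily over the whole period, which the text itself cautions is not guaranteed.

```python
# Compounding a hypothetical, steady efficiency-doubling period over a waiting time.


def efficiency_multiplier(months: float, doubling_months: float) -> float:
    """Efficiency gain after `months` if efficiency doubles every `doubling_months`."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    return 2 ** (months / doubling_months)


grid_wait_months = 36  # upper end of the Tokyo-area wait cited in the text

for doubling in (8, 9):
    gain = efficiency_multiplier(grid_wait_months, doubling)
    print(f"Doubling every {doubling} months -> {gain:.1f}x over {grid_wait_months} months")
```

Even at the slower 9-month pace, efficiency would improve sixteenfold in the time a single grid connection takes, which illustrates why efficiency gains can outrun infrastructure expansion where that pace actually applies.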

PUE (Power Usage Effectiveness): The total power consumption of a data center divided by the power consumption of the IT equipment itself. The closer it is to 1.0, the less overhead power (such as cooling) is used and the better the efficiency. The latest designs target a PUE below 1.1.
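The definition translates directly into a calculation. The following is a minimal sketch; the energy readings are hypothetical, and `overhead_share` is a helper introduced here for illustration rather than a standard metric name.

```python
# PUE = total facility energy / IT equipment energy.


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness for a measurement period."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy spent on non-IT overhead such as cooling."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1 - 1 / pue_value


# Hypothetical readings: 1,500 kWh drawn by the facility, 1,000 kWh by IT equipment.
legacy = pue(1500, 1000)

print(f"Legacy PUE: {legacy:.2f}, overhead share: {overhead_share(legacy):.0%}")
print(f"Overhead share at PUE 1.1: {overhead_share(1.1):.1%}")
```

At a PUE of 1.5, one third of all electricity goes to overhead; at 1.1 the share falls to about 9%, so the same grid allocation can feed noticeably more IT load.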

The AI Supply Chain as a Geopolitical Risk

In drawing a medium- to long-term roadmap, the vulnerability of the AI supply chain is a risk that cannot be ignored. The sustainability of AI is directly linked not only to securing advanced chips but also to the stable procurement of the power to run them and the critical minerals essential for infrastructure.

These resources are susceptible to geopolitical tensions and trade regulations; if part of the supply chain stagnates, the growth scenario driven by AI will stall. To ensure business continuity, the following risk hedges are essential:

- Diversification of Bases: Considering the placement of data centers in regions where power is abundant and stable.

- Architectural Shift: A structural shift from centralized Cloud AI to Edge AI, which performs power-saving processing at the source of the data.

A strategy that assumes the physical wall of energy constraints and extracts maximum processing power from limited resources will become the standard in next-generation AI business.

Chapter 3: Strategy Map of "AI x Power" by Major Regions

The Source of Intelligence Shifts from Software to Physical Resources

Now that the source of AI intelligence has shifted from software to physical resources like power, countries worldwide are engaging in energy acquisition races tailored to their respective national circumstances. The U.S. and China aim to overwhelm with the quantity of computational resources, Europe uses regulations as a catalyst to set quality standards, and Japan aims for a reversal through technological innovation in efficiency. These strategic differences are important turning points that will determine future industrial structures.

1. The Quantity Strategy of the U.S. and China: SMRs and Next-Gen Energy Investment

The U.S. and China, at the forefront of the AI race, define AI performance by the volume of computational resources and are moving to secure massive power grids to support them.

- USA (Massive Investment in Next-Gen Energy): In addition to drawing on abundant natural gas, hyperscalers are accelerating investment in next-generation nuclear power, including Small Modular Reactors (SMRs). The government is also supporting power supply nationwide by expediting permits for data center construction through executive orders.

- China (Resource Optimization via National Projects): While maintaining inexpensive and massive power through coal-fired generation in the short term, China is promoting the "East Data, West Computing" project, leveraging its vast land. By transferring computational loads to western regions where renewable energy is abundant, it aims to break through the limits of supply capacity.

For both countries, maintaining the AI supply chain is directly linked to national security. Geopolitical risks, which cover not just semiconductors but also the critical minerals and energy resources that support power infrastructure, have entered a stage where they dictate the continuity of AI business.

2. EU: High-Quality, High-Regulation Route Centered on Sustainability

Europe, where energy costs are higher than in other regions, is adopting a strategy that defines the quality of AI through sustainability, rather than chasing the quantity of power.

- Creating Value Through Regulation: The EU's Energy Efficiency Directive (EED) mandates data centers to disclose energy consumption and reuse waste heat. These regulations are a strategic move to differentiate European AI as clean and reliable.

- Shift in Investment Targets: For investors, the focus of European AI-related stocks is shifting from software companies to green technology companies equipped with advanced waste heat management and high-efficiency infrastructure.

3. Japan's Reversal Strategy: Innovation in Power Efficiency via Optoelectronic Fusion (IOWN)

Japan faces severe constraints: limited land and energy resources, and prolonged wait times for power grid connections. The key to breaking through this physical wall is Optoelectronic Fusion technology (such as IOWN).

Japan's strategy is to differentiate itself through power efficiency (quality) against global companies with massive computational resources (quantity).

- Significant Reduction in Power Consumption: By introducing light-based network computing technology, it is a realistic goal to drastically reduce power consumption compared to conventional data centers.

- Maximization of Intelligence: As suppliable power hits a ceiling worldwide, this approach of maximizing intelligence per watt has the potential to create a strong competitive advantage in a future of tight energy constraints.

IOWN: A next-generation information and communication infrastructure proposed by NTT. It replaces conventional electrical signal processing with light, aiming for far lower power consumption, high bandwidth, and low latency. It is expected to be the "trump card" for drastically lowering the power load of AI processing.

Strategic Investment Anticipating Changes in Industrial Structure

Strategies for obtaining power resources vary by region. Business leaders and investors must have the following perspectives:

- Management: Evaluate the regional energy strategy (focus on quantity or efficiency) as a major management risk and opportunity when selecting locations.

- Investors: Shift focus from software feature comparisons to companies with connectivity to power grids, liquid cooling technologies that enhance cooling efficiency, and new materials.

- Strategists: Predict how local production for local consumption of power and distributed processing via Edge AI will replace centralized cloud models over the next 10 years, and rebuild roadmaps accordingly.

| Region & Strategy Type | Key Strategy | Physical Infrastructure Approach | Long-term Target |
|---|---|---|---|
| USA (Quantity & Infrastructure-centric) 🇺🇸 | Next-Gen Energy Investment: Ensuring Stable Supply | • Early commercialization of SMRs (Small Modular Reactors). • Life extension and restarting of existing nuclear power plants. • Expediting permits for data center construction. | Dominating Computing Resources as a Pillar of National Security |
| China (Quantity & State Optimization) 🇨🇳 | "East Data, West Computing": Resource Allocation Optimization | • Transferring data from eastern cities to utilize renewable energy in the west. • Maintaining coal power in the short term while maximizing supply capacity. | Breaking Resource Constraints via Optimized National Intelligence Mapping |
| Japan (Quality & Efficiency-centric) 🇯🇵 | Optoelectronic Fusion (IOWN): Maximizing Intelligence per Watt | • 40% reduction in power consumption via IOWN implementation. • Pursuing PUE limits with liquid and immersion cooling. • Decentralizing hubs (Hokkaido/Kyushu) via Corporate PPAs. | Becoming the World's Most Efficient AI Infrastructure Nation |

Chapter 4: Three "Brakes" Business Leaders Must Guard Against

Physical and Geopolitical Limits Hindering the AI Economic Zone

As AI shifts to an economic zone driven by power, strategic success requires a correct recognition of the physical and geopolitical constraints hidden behind technological innovation. We explain three serious risks that executives and investors will face from a supply chain perspective.

1. AI Semiconductor Supply Chain Risk: HBM and the Reality of Chip Monopolization

Even if power for AI infrastructure is secured, business cannot function if the hardware for computational processing—namely GPUs and high-performance memory—is unavailable. In particular, HBM (High Bandwidth Memory), which improves data transfer speeds, is an indispensable component for training large-scale AI models.

- Monopolization by Capital Power: Global hyperscalers with enormous capital are effectively monopolizing advanced device manufacturing capacity through advance reservations.

- Impact on Management: As dependence on specific advanced chips grows, latecomers risk being unable to procure chips even if they have power. Securing the supply chain early must be a top management priority.

HBM (High Bandwidth Memory): Semiconductor memory that dramatically increases data transfer speeds by stacking memory dies vertically. It is an essential component for generative AI training, and its supply is currently concentrated among a small number of manufacturers.

2. Rise of Resource Nationalism: Export Restrictions on Gallium/Rare Metals and Geopolitical Risks

As the AI competition shifts from quantity to power efficiency (quality), the importance of next-generation semiconductor materials such as GaN (Gallium Nitride) and SiC (Silicon Carbide), which suppress power loss, is increasing. While these play a crucial role in data center power saving, their supply chains are extremely fragile.

- Uneven Distribution of Strategic Resources: Critical minerals like gallium and rare metals are concentrated in specific countries, posing a constant risk of export restrictions due to geopolitical tensions.

- Shift in Evaluation Criteria: Investors and analysts should adopt a new evaluation criterion: how well a company can maintain stable procurement networks for new materials, either internally or within allied nations.

3. Deterioration of Capital Efficiency: Lower Profitability due to Rising Resource Costs

The third factor threatening the sustainability of AI business is the rapid deterioration of Return on Investment (ROI). AI data centers consume as much or more energy than heavy industries like aluminum smelters.

- The Profitability Trap: Due to rising fuel prices, power expenditures for data centers have risen significantly. In the calculation of how much value can be generated from one watt of power, there is a risk that rising resource costs will negate the efficiency gains from technological innovation.

- Strategic Recommendation: Management should recalculate AI costs not just as license fees, but as infrastructure maintenance costs that include energy volatility risks. Furthermore, a shift to distributed Edge AI, which can keep energy costs down, is an indispensable strategy from the perspective of capital efficiency.

【Column】 Concrete Measures for Japanese Companies to Reverse the Tide via "Quality"

—Infrastructure Strategies to Break Through the "2026 Physical Wall"

Will Optoelectronic Fusion Technology Be Ready for the "2026 Wall"?

To be blunt, Optoelectronic Fusion technology (IOWN, etc.) is unlikely to be fully ready for the explosive increase in power demand (the physical wall) expected in 2026.

This technology is a "medium- to long-term trump card" looking toward 2030 and beyond; it has not yet reached a Technology Readiness Level (TRL) suitable for commercial deployment. Practical hurdles remain, such as the lack of a commercial manufacturing platform, reliability issues, and the cost of retrofitting existing infrastructure.

Therefore, business leaders must not only wait for future technology to be completed but also immediately implement "practical and effective measures" to overcome the 2026 crisis.

Four Practical Approaches Japan Should Implement Immediately

1. Fundamental Review of Power Procurement Strategy (Hedging Cost and Supply)

Take measures to directly avoid supply anxiety without relying solely on conventional retail plans.

- Direct Procurement from Wholesale Power Markets: Perform procurement linked to market prices and optimize operations using AI-driven price forecasting.

- Utilization of Corporate PPAs (Power Purchase Agreements): Sign long-term fixed-price contracts with renewable energy providers to offset fuel price hike risks while preferentially securing green power.
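The hedging effect of combining the two procurement routes above can be sketched numerically. All prices and volumes below are hypothetical, and the model ignores real-world details such as imbalance charges, shaping, and contract fees; it only shows how a fixed-price PPA share narrows the range of possible annual costs.

```python
# Hypothetical comparison of spot-only procurement vs. a partial fixed-price PPA.


def blended_cost(load_mwh: float, spot_price: float, ppa_price: float, ppa_share: float) -> float:
    """Annual cost when `ppa_share` of the load is bought at the PPA price and the rest at spot."""
    if not 0.0 <= ppa_share <= 1.0:
        raise ValueError("ppa_share must be between 0 and 1")
    return load_mwh * (ppa_share * ppa_price + (1.0 - ppa_share) * spot_price)


def cost_spread(load_mwh: float, spot_scenarios: list[float], ppa_price: float, ppa_share: float) -> float:
    """Gap between the most and least expensive outcome across spot-price scenarios."""
    costs = [blended_cost(load_mwh, s, ppa_price, ppa_share) for s in spot_scenarios]
    return max(costs) - min(costs)


load_mwh = 1000.0                    # hypothetical annual consumption
spot_scenarios = [10.0, 20.0]        # hypothetical low and high average spot prices per MWh
ppa_price = 15.0                     # hypothetical fixed PPA price per MWh

for share in (0.0, 0.5, 1.0):
    spread = cost_spread(load_mwh, spot_scenarios, ppa_price, share)
    print(f"PPA share {share:.0%}: cost spread {spread:,.0f}")
```

The spread shrinks in proportion to the PPA share: the fixed-price portion is insulated from fuel-driven price swings, while the remaining spot portion keeps exposure to both rises and falls.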

2. Departure from "Tokyo Centralization" (Base Diversification Strategy)

To avoid wait times for grid connections in the Tokyo area (up to 36 months), move bases to regions with surplus resources.

- Diversification to Cold Regions and Renewable-Suitable Areas: Hasten distributed placement to Hokkaido (Tomakomai, etc.), which has surplus grid capacity and benefits from outside air cooling, or Kyushu, which has high renewable energy supply capacity.

- Local Production for Local Consumption of Power: Combine onsite solar power generation and Battery Energy Storage Systems (BESS) to promote the introduction of autonomous power sources.

3. Shift in Computational Architecture (Transition to Edge AI)

Avoid concentrating all processing in massive cloud data centers and distribute the physical load.

- Load Reduction via Edge AI: Proliferate "Edge AI" that processes data immediately at factories, in vehicles, or on devices to suppress communication and calculation loads on central data centers. This is a realistic means of increasing intelligence productivity in environments where power grids are saturated.

4. Advanced Operation and Heat Management Technology (Pursuing the Limits of Existing Equipment)

Maximize efficiency (quality) with current equipment without waiting for next-generation technology.

- Precise Heat Management and Liquid Cooling: By introducing immersion cooling or liquid cooling (cold plates), bring PUE down to 1.05 or less, close to the theoretical limit of 1.0. While this is becoming a standard goal for new data centers, existing air-cooled data centers require large-scale renovation investment, so strategic replacement plans that account for ROI are essential.

- Demand Response Implementation: Flexibly adjust the load on the power grid by limiting server power or supplying power from storage batteries during peak demand periods.
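The demand response idea can be sketched as a simple hourly dispatch rule: draw from the grid up to an agreed cap, cover the excess from batteries, and cap server power for whatever remains. This is a simplified illustration with hypothetical numbers; it uses one-hour steps, ignores battery recharging and conversion losses, and does not represent any particular utility program.

```python
# Simplified hourly demand response: grid first, then battery, then load curtailment.


def demand_response(
    hourly_demand_kw: list[float],
    grid_cap_kw: float,
    battery_kwh: float,
    battery_max_kw: float,
) -> list[tuple[float, float, float]]:
    """Return (grid_kw, battery_kw, curtailed_kw) for each one-hour step."""
    plan = []
    remaining_kwh = battery_kwh
    for demand in hourly_demand_kw:
        grid = min(demand, grid_cap_kw)
        excess = demand - grid
        # Discharge is limited by the battery's power rating and its remaining energy.
        battery = min(excess, battery_max_kw, remaining_kwh)
        remaining_kwh -= battery  # one-hour step, so kW drawn equals kWh used
        curtailed = excess - battery  # load to shed by limiting server power
        plan.append((grid, battery, curtailed))
    return plan


# Hypothetical peak afternoon: demand climbs past a 1,000 kW grid cap.
plan = demand_response([800, 1200, 1500], grid_cap_kw=1000, battery_kwh=300, battery_max_kw=250)

for hour, (grid, battery, curtailed) in enumerate(plan):
    print(f"hour {hour}: grid={grid} kW, battery={battery} kW, curtailed={curtailed} kW")
```

In this example the battery absorbs the first peak entirely, but runs low in the following hour, at which point server power has to be capped; sizing storage against the expected peak duration is the main design question.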

A "Two-Pronged" Approach of Future Tech and Reality

While Optoelectronic Fusion technology holds the potential to fundamentally solve AI's energy constraints in the future, social implementation will only become full-scale around 2030.

The key to surviving the 2026 crisis lies not in placing excessive expectations on future technology, but in whether "practical infrastructure strategies"—such as power procurement, site selection, and distributed processing at the edge—can be executed as top management priorities.

Chapter 5: [Summary] Those Who Know Infrastructure Will Rule the AI Business

From the "Era of Software" to the "Era of Physical Infrastructure and Energy"

Until now, discussions of AI business have centered on the "software" domain, such as the evolution of algorithms and the convenience of user interfaces. However, what we are currently facing is the entry into the "Era of Hardware and Infrastructure," where AI demands excessive physical energy and hardware, rewriting existing social foundations.

AI data centers have already begun to consume power equivalent to heavy industry, and in specific regions, the surge in power demand is exposing the limits of the power grid. The battle for AI supremacy can no longer be won by code writers alone. The decisive question has become how cheaply and stably power can be secured, and power has transformed into the "new currency" that determines AI performance.

Breaking the "Wall of Quantity" with "Practical Quality"

Against global companies deploying "quantity" strategies backed by massive computational resources, the path Japan should take—as detailed in the Chapter 4 column—is a shift in investment toward "Power Efficiency (Quality)."

In the medium to long term, Optoelectronic Fusion technology (IOWN, etc.) will be a powerful solution, but for the looming 2026 crisis, practical measures with immediate effect are essential: introducing liquid cooling to reduce PUE, diversifying bases to regions where energy costs can be kept down, and shifting to Edge AI to reduce the load on data centers. Japan's chance for victory lies in the "competition of density": how much intelligence can be generated from limited energy.

Geopolitical Resilience: Supply Chains that Dictate Business Continuity

The "comprehensive AI supply chain risk"—extending beyond securing semiconductor chips—is what management should be most wary of in future AI strategies.

The stability of the power infrastructure that runs AI, as well as the procurement networks for strategic resources like gallium and rare metals essential for next-generation devices, now function as geopolitical weapons between nations. When considering AI implementation or building in-house data centers, the method of calculating only software costs is no longer valid. "Long-term fluctuations in energy costs" and "supply chain resilience" must be integrated as top-priority management risks.

Recommendations for 2030: Risk Hedging through Power Procurement and Edge AI

To ensure the sustainability of AI business, three perspectives must be integrated into management strategy:

- Long-term Securing of Infrastructure: Reconstruct base strategies assuming wait times for grid connections and rising power costs.

- Responsive Investment in Efficiency: Prioritize the adoption of practical technologies (liquid cooling, Edge AI, PPA procurement) that maximize computational performance per watt.

- Management of Geopolitical Risks: Build resilient resource procurement networks that do not rely on specific countries or suppliers.

The success or failure of AI utilization is not determined by the software development perspective alone. Those who can accurately grasp "physical constraints"—such as grid capacity limits, hardware material acquisition, and national energy policies—and build business models adapted to them will rule the next-generation intelligence economy.