Eradicating Brand Inconsistencies: LLMO Strategies for Building a Consistent, AI-Preferred Brand

In the AI era, inconsistent brand notation (variant spellings of a company, product, or concept name) erodes trust and makes it harder for AI systems to identify and recommend your brand. This article explains LLMO (Large Language Model Optimization) strategies using mitsumonoAI to strengthen "Entity Recognition" and build a brand chosen by both AI and customers through consistent communication.

The Pitfalls of Brand Inconsistency in the AI Era

AI technologies like ChatGPT and Gemini are fundamentally changing how people find information and make decisions. These models recognize companies and brands as single "entities" by synthesizing vast amounts of web data. In this AI-driven landscape, inconsistent brand representation is no longer just a minor typo; it is a strategic risk.

3 Risks of Ignoring Brand Inconsistency

- The "Entity Recognition" Crisis: AI builds a brand profile by connecting fragmented information across the web. If your company name or core concepts vary across different channels, AI fails to recognize them as a single brand, causing you to disappear from search results and AI recommendations.

- Loss of Trustworthiness (E-E-A-T): Inconsistency signals to both AI and users that a brand is an unreliable source of information. This negatively impacts Google’s E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) evaluation, lowering your visibility and authority.

- Operational Inefficiency and Blurred Brand Image: Constantly double-checking "correct" terminology slows down content production. Furthermore, inconsistent messaging confuses customers, ultimately diluting your brand identity in the marketplace.

LLMO (Large Language Model Optimization) Strategy with mitsumonoAI

mitsumonoAI reduces brand inconsistency by teaching AI the "core" of your brand. This enables an LLMO strategy that keeps your message unified across every channel.

- Mission Registration: Define your USP, brand voice, and "do-not-use" expressions to establish a clear identity for the AI to follow.

- Consistent Content Workflow: Automatically check SNS posts and blog articles against your registered mission to prevent name variations and messaging drift.

By positioning AI as a "dedicated brand manager" that maintains consistency, humans can focus on adding unique perspectives and emotional depth. This collaboration is the definitive way to strengthen your brand in the AI era.

[Practical Section] Step-by-Step Guide

LLMO operation using mitsumonoAI is a systematic approach to strengthening AI entity recognition. Here are three steps to achieving consistent communication through human-AI collaboration.

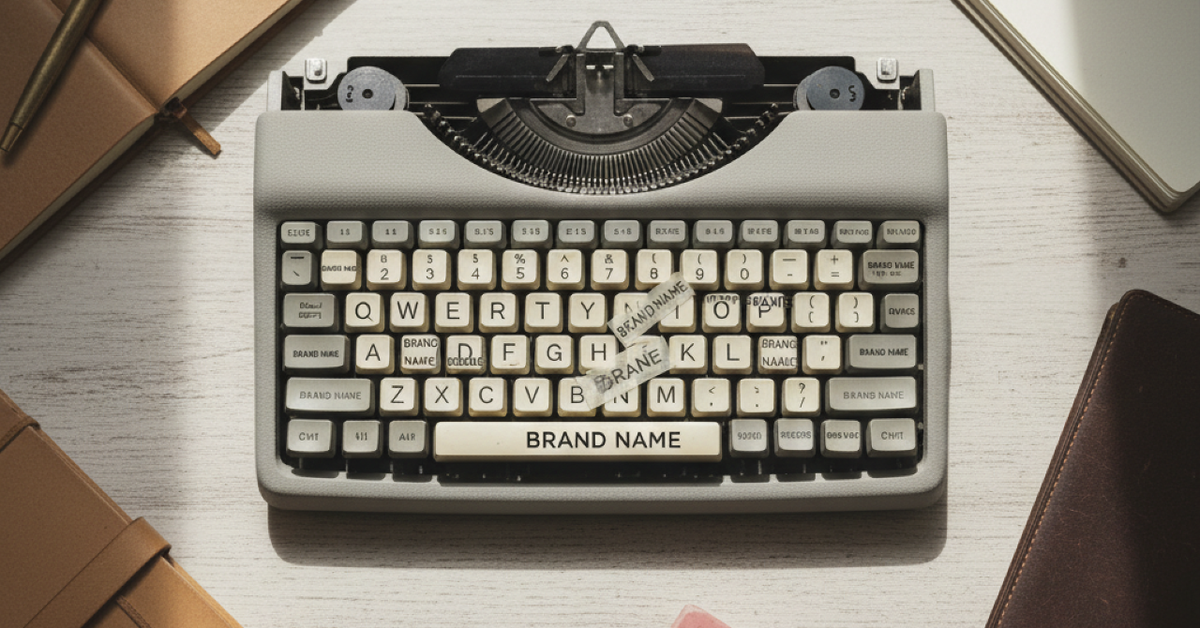

Step 1: Teach the "Brand Core" to AI via Mission Registration

First, use the "Mission Registration" feature to accurately teach the AI the most important elements of your brand.

[Preparation & Execution]

Open "My Mission" from the mitsumonoAI settings screen.

Define and input the mission, vision, and Unique Selling Proposition (USP).

Through this step, the AI builds a powerful foundation to recognize your brand as a consistent "Entity" rather than a mere sequence of characters. This is the first vital step in enhancing AI entity recognition.

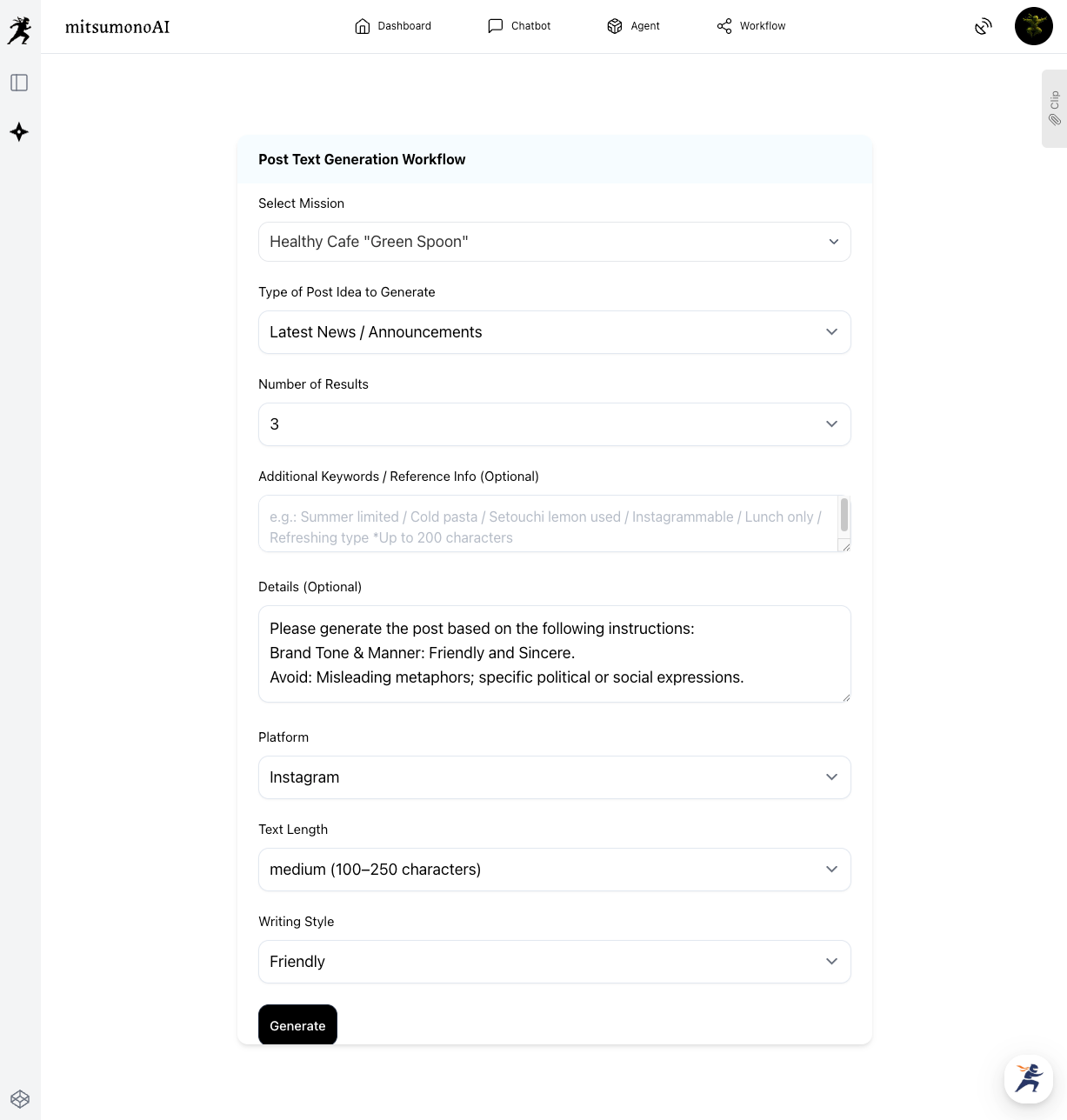

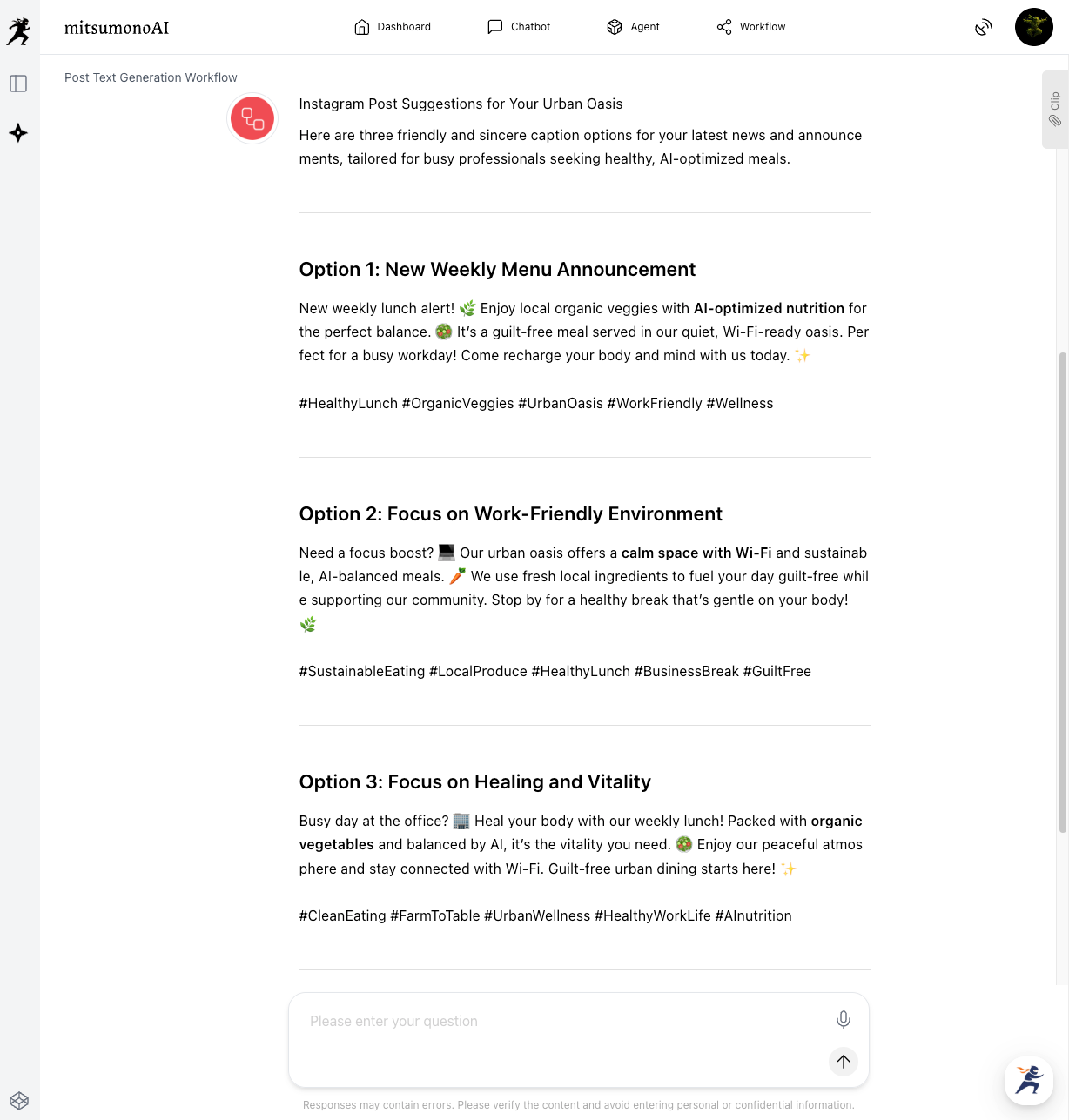
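To make the idea of a registered "brand core" concrete, the information entered in Step 1 can be pictured as a small structured record. The sketch below is purely illustrative and does not reflect how mitsumonoAI stores a mission: the class name `BrandMission`, its field names, and the sample values are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class BrandMission:
    """Hypothetical record of the Step 1 inputs (not a mitsumonoAI API)."""

    canonical_name: str              # the one approved spelling of the brand
    mission: str
    vision: str
    usp: str                         # Unique Selling Proposition
    brand_voice: str = ""            # optional tone description
    do_not_use: Tuple[str, ...] = () # expressions the brand avoids

    def missing_fields(self) -> List[str]:
        """Return the required fields that are empty or whitespace-only."""
        required = ("canonical_name", "mission", "vision", "usp")
        return [name for name in required if not getattr(self, name).strip()]


# Sample values are invented for illustration only.
example = BrandMission(
    canonical_name="ExampleBrand",
    mission="Help small teams publish consistent content.",
    vision="",
    usp="One registered mission drives every channel.",
    do_not_use=("cheapest", "guaranteed results"),
)
print(example.missing_fields())  # ['vision']
```

Checking for empty fields before relying on the record mirrors the point of this step: the brand core is only useful as a reference if every key element has actually been defined.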

Step 2: AI Check & Correction via Post Text Creation Workflow

Once the AI has learned the brand core, use the "Post Text Creation Workflow" to have the AI automatically detect and assist in correcting notation inconsistencies in generated content.

[Preparation & Execution]

Launch the "Post Text Creation Workflow" and select the previously registered mission.

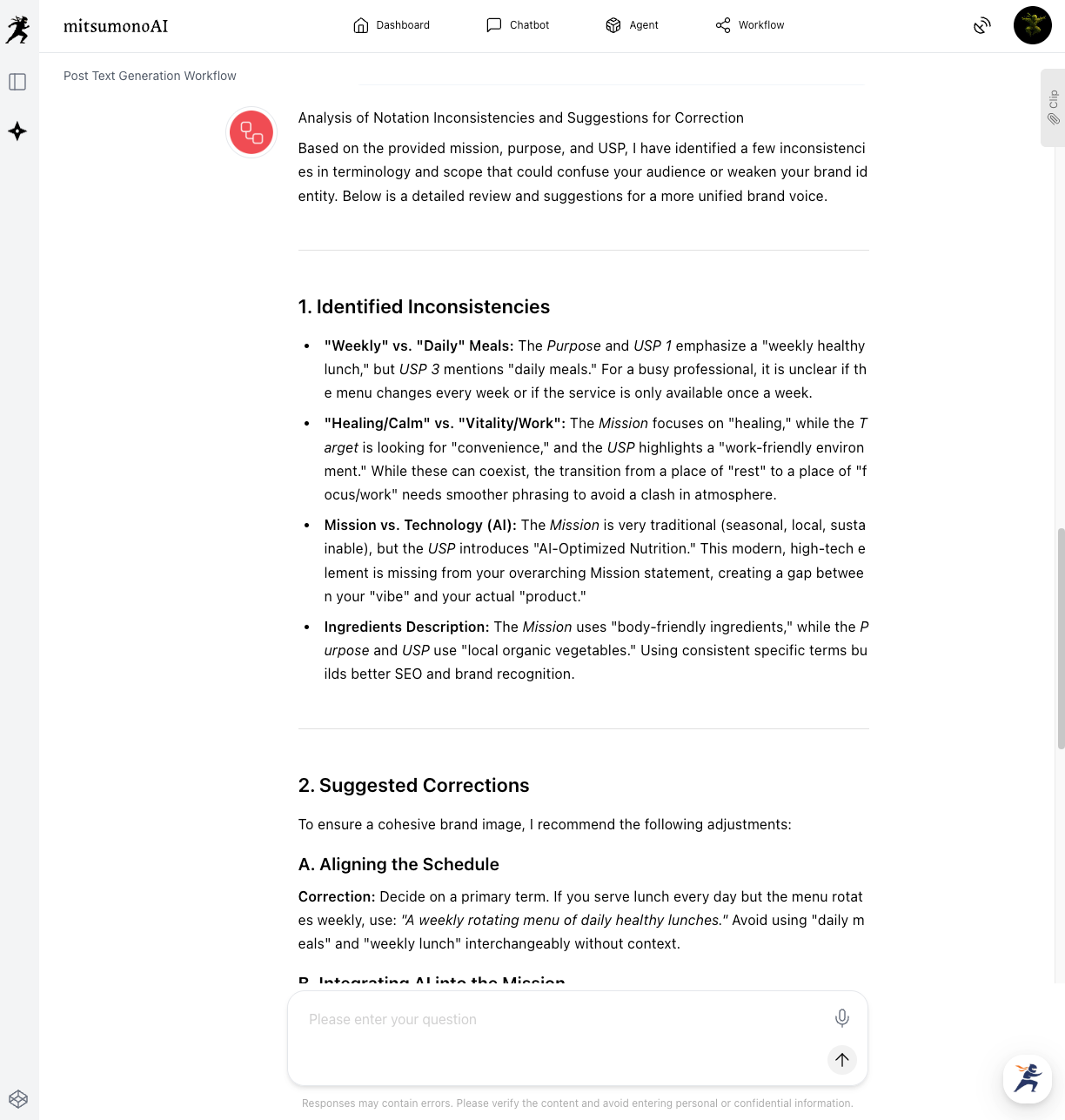

Verify consistency based on the mission info with a prompt like:

"Based on our registered mission information, please check for any notation inconsistencies and provide suggestions for correction."

In this step, the AI generates content while referring to the brand core and checking for inconsistencies. This can substantially reduce manual review time and helps ensure consistency from the earliest stages of content production.
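For readers who want a feel for what a notation check involves, here is a minimal rule-based sketch. It is not how mitsumonoAI performs the check (the workflow above relies on an AI model and the registered mission); it only illustrates the underlying idea of comparing a draft against one canonical spelling, a list of known variants, and a list of "do-not-use" expressions. The function name `check_notation`, the `Issue` type, and the sample brand name are assumptions.

```python
import re
from typing import List, NamedTuple, Sequence


class Issue(NamedTuple):
    kind: str        # "variant" or "banned"
    found: str       # text exactly as it appears in the draft
    suggestion: str  # proposed replacement; empty for banned phrases
    position: int    # character offset in the draft


def _whole_phrase(phrase: str, flags: int = 0) -> re.Pattern:
    # Match the phrase only when it is not embedded in a longer word.
    return re.compile(r"(?<!\w)" + re.escape(phrase) + r"(?!\w)", flags)


def check_notation(
    draft: str,
    canonical: str,
    variants: Sequence[str],
    do_not_use: Sequence[str] = (),
) -> List[Issue]:
    """List known variant spellings and banned phrases found in the draft."""
    issues: List[Issue] = []
    for variant in variants:
        # Variants are matched case-sensitively: each one is an exact spelling.
        for m in _whole_phrase(variant).finditer(draft):
            issues.append(Issue("variant", m.group(0), canonical, m.start()))
    for phrase in do_not_use:
        # Banned phrases are matched regardless of capitalization.
        for m in _whole_phrase(phrase, re.IGNORECASE).finditer(draft):
            issues.append(Issue("banned", m.group(0), "", m.start()))
    return sorted(issues, key=lambda issue: issue.position)


draft = "Example Brand launches today. Try examplebrand, with guaranteed results!"
for issue in check_notation(
    draft,
    canonical="ExampleBrand",
    variants=["Example Brand", "examplebrand"],
    do_not_use=["guaranteed results"],
):
    print(issue.kind, repr(issue.found), "->", repr(issue.suggestion))
```

A fixed list like this only catches variants someone has already thought of, and a variant that is a whole-word part of the canonical name would be flagged inside correct text. Those gaps are one reason the article pairs automated checking with the human review described in Step 3.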

Step 3: Human Final Review and Strengthening Consistency (HITL: Human-in-the-Loop Practice)

Even after AI generation and checking, humans remain responsible for final quality assurance. In this step, a human reviewer checks for brand nuance, emotional tone, and recent developments that the AI might miss, and takes "Accountability" for the content.

[Preparation & Execution]

A PR representative, marketing head, or brand manager reviews the content (e.g., SNS post drafts) generated and corrected by the AI in Step 2.

The HITL Process (Assigning Accountability)

The reviewer goes beyond just confirming AI suggestions and validates the content based on:

- Brand Voice Nuance: Is the brand's unique phrasing and emotion reflected appropriately?

- Alignment with Latest Info: Are there contradictions with recent changes or non-public information?

- Reflecting Strategic Intent: Does the content align with short-term and long-term strategic goals?

- Legal & Ethical Considerations: Are there potential legal risks or ethical issues the AI missed?

Through this step, AI efficiency and human accountability combine to maximize brand consistency. This reinforces AI entity recognition and strengthens customer trust.
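One way to picture the accountability aspect of this step is as an approval gate: content is cleared for publication only after a named reviewer has confirmed each of the four checks listed above. The sketch below is a hypothetical illustration, not a mitsumonoAI feature; the class name `HumanReview` and the criterion keys are assumptions chosen to mirror that list.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Keys mirror the four review points in the HITL process (names are assumed).
REVIEW_CRITERIA = (
    "brand_voice",
    "latest_info",
    "strategic_intent",
    "legal_ethical",
)


@dataclass
class HumanReview:
    """Hypothetical sign-off record for one piece of AI-checked content."""

    reviewer: str
    results: Dict[str, bool] = field(default_factory=dict)

    def record(self, criterion: str, passed: bool) -> None:
        """Store the reviewer's verdict for one criterion."""
        if criterion not in REVIEW_CRITERIA:
            raise ValueError(f"unknown criterion: {criterion}")
        self.results[criterion] = passed

    def outstanding(self) -> List[str]:
        """Criteria that are unchecked or failed, in checklist order."""
        return [c for c in REVIEW_CRITERIA if not self.results.get(c, False)]

    def approved(self) -> bool:
        """True only when a named reviewer has passed every criterion."""
        return bool(self.reviewer.strip()) and not self.outstanding()


review = HumanReview(reviewer="Brand manager")
review.record("brand_voice", True)
review.record("latest_info", True)
review.record("strategic_intent", True)
print(review.approved(), review.outstanding())  # False ['legal_ethical']
```

Requiring a non-empty reviewer name alongside the checklist keeps the sign-off tied to a specific person, which is the practical meaning of accountability in this workflow.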

Applications and Expansion

This LLMO strategy for strengthening brand consistency can be applied across various business domains beyond single content pieces:

- Ensuring Consistency in Chatbot Responses: Generate and check customer service chatbot responses based on registered mission info to maintain a unified message across all touchpoints.

- Unified Corporate Culture in Recruitment: Generate and verify content for recruitment pages and SNS based on the mission to consistently convey corporate culture and values to candidates.

- Unifying Brand Names in Multilingual Content: For global expansion, unifying brand notation across languages is critical. Teaching the AI the brand core prevents inconsistencies across different languages and strengthens international entity recognition.

- Consistency in Internal Communication: Use AI to create consistent messages for internal training materials and PR, deepening brand understanding and cross-departmental collaboration.

Summary

This article has explained the risks of "notation inconsistency" in the AI era and introduced an LLMO strategy to strengthen "Entity Recognition" through HITL (Human-in-the-Loop) operations using mitsumonoAI.

- Inconsistent notation across diverse channels hinders AI entity recognition and lowers brand trust.

- Using "Mission Registration" and "Post Text Creation Workflow" in mitsumonoAI allows you to teach the AI your brand core and automatically ensure consistency.

- Combining AI efficiency with final human validation (HITL) delivers communication with the "Trustworthiness" and "Uniqueness" essential for the AI era.

Unlike traditional search engines, AI models weigh not only content quality but also whether a brand's consistency, transparency, and reliability are visible across its content. Move beyond ad hoc information sharing and build a durable brand through strategic AI content operations grounded in data and human expertise.

mitsumonoAI is a business-specialized AI platform designed to simultaneously improve "work quality" and "speed."

Beyond LLMO strategies for brand consistency, it can be utilized to solve various business challenges, improve efficiency, and create new value.